-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Stocks sink amid fears over US-Iran ceasefire

-

Premier League losses soar for clubs locked in 'arms race'

Premier League losses soar for clubs locked in 'arms race'

-

For Israel's Circassians, food and language sustain an ancient heritage

-

'Super El Nino' raises fears for Asia reeling from Middle East conflict

'Super El Nino' raises fears for Asia reeling from Middle East conflict

-

Pulitzers honor damning coverage of Trump and his policies

-

US-Iran ceasefire on brink as UAE reports attacks

US-Iran ceasefire on brink as UAE reports attacks

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

-

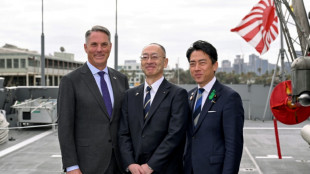

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

More Nepalis drive electric, evading global fuel shocks

-

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

-

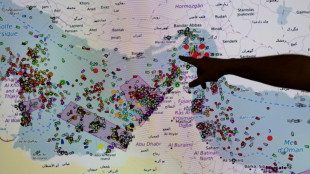

French hub monitors Hormuz tensions from afar

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

OpenAI insiders blast lack of AI transparency

A group of current and former employees from OpenAI on Tuesday issued an open letter warning that the world's leading artificial intelligence companies were falling short of necessary transparency and accountability to meet the potential risks posed by the technology.

The letter raised serious concerns about AI safety risks "ranging from the further entrenchment of existing inequalities, to manipulation and misinformation, to the loss of control of autonomous AI systems potentially resulting in human extinction."

The 16 signatories, who also included a staff member from Google DeepMind, warned that AI companies "have strong financial incentives to avoid effective oversight" and that self-regulation by the companies would not effectively change this.

"AI companies possess substantial non-public information about the capabilities and limitations of their systems, the adequacy of their protective measures, and the risk levels of different kinds of harm," the letter said.

"However, they currently have only weak obligations to share some of this information with governments, and none with civil society. We do not think they can all be relied upon to share it voluntarily."

That reality, the letter added, meant that employees inside the companies were the only ones who could notify the public, and the signatories called for broader whistleblower laws to protect them.

"Broad confidentiality agreements block us from voicing our concerns, except to the very companies that may be failing to address these issues," the letter said.

The four current employees of OpenAI signed the letter anonymously because they feared retaliation from the company, The New York Times reported.

It was also signed by Yoshua Bengio, Geoffrey Hinton and Stuart Russell, who are often described as AI "godfathers" and have criticized the lack of preparation for AI's dangers.

OpenAI in a statement pushed back at the criticism.

"We're proud of our track record of providing the most capable and safest AI systems and believe in our scientific approach to addressing risk," the company said.

"We agree that rigorous debate is crucial given the significance of this technology and we'll continue to engage with governments, civil society and other communities around the world."

OpenAI also said it had "avenues for employees to express their concerns including an anonymous integrity hotline" and a newly formed Safety and Security Committee led by members of the board and executives, including CEO Sam Altman.

The criticism of OpenAI, which was first released to the Times, comes as questions are growing around Altman's leadership of the company.

OpenAI has unveiled a wave of new products, though the company insists they will be released to the public only after thorough testing.

The unveiling of a human-like chatbot caused controversy when Hollywood star Scarlett Johansson complained that its voice closely resembled her own.

She had previously turned down an offer from Altman to work with the company.

St.Ch.Baker--CPN