-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Stocks sink amid fears over US-Iran ceasefire

-

Premier League losses soar for clubs locked in 'arms race'

Premier League losses soar for clubs locked in 'arms race'

-

For Israel's Circassians, food and language sustain an ancient heritage

-

'Super El Nino' raises fears for Asia reeling from Middle East conflict

'Super El Nino' raises fears for Asia reeling from Middle East conflict

-

Pulitzers honor damning coverage of Trump and his policies

-

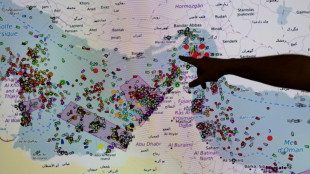

US-Iran ceasefire on brink as UAE reports attacks

US-Iran ceasefire on brink as UAE reports attacks

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

-

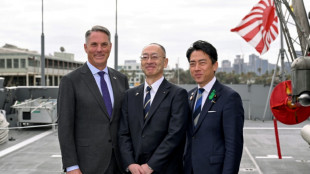

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

More Nepalis drive electric, evading global fuel shocks

-

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

-

French hub monitors Hormuz tensions from afar

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

OpenAI forms AI safety committee after key departures

OpenAI, the company behind ChatGPT, announced the formation of a new safety committee on Tuesday, weeks after the departures of key executives raised questions about the firm's commitment to mitigating the dangers of artificial intelligence.

The company said the committee, which will include CEO Sam Altman, is being established as OpenAI begins training its next AI model, expected to surpass the capabilities of the GPT-4 system powering ChatGPT.

"While we are proud to build and release industry-leading models on both capabilities and safety, we welcome a robust debate at this important juncture," OpenAI stated.

Composed of board members and executives, the committee will spend the next 90 days evaluating and strengthening OpenAI's processes and safeguards for advanced AI development.

OpenAI said it will also consult outside experts during the review, including former US cybersecurity officials Rob Joyce, who previously led cybersecurity efforts at the National Security Agency, and John Carlin, a former senior Justice Department official.

Over those three months, the group will scrutinize OpenAI's current safety protocols and recommend improvements or additions.

After this 90-day review, the committee's findings will be presented to the full OpenAI board before being publicly released.

The committee's formation comes on the heels of recent executive departures that stoked concerns about OpenAI's AI safety priorities.

Earlier this month, the company dissolved its "superalignment" team dedicated to mitigating long-term AI risks.

Announcing his exit in a series of posts on X, the platform formerly known as Twitter, team co-lead Jan Leike criticized OpenAI for prioritizing "shiny new products" over vital safety work.

"Over the past few months, my team has been sailing against the wind," Leike said.

OpenAI has also faced controversy over an AI voice that some said closely resembled actress Scarlett Johansson's, though the company denied trying to imitate the Hollywood star.

Y.Ibrahim--CPN