-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Yoko says oh no to 'John Lemon' beer

-

Stocks sink amid fears over US-Iran ceasefire

Stocks sink amid fears over US-Iran ceasefire

-

Premier League losses soar for clubs locked in 'arms race'

-

For Israel's Circassians, food and language sustain an ancient heritage

For Israel's Circassians, food and language sustain an ancient heritage

-

'Super El Nino' raises fears for Asia reeling from Middle East conflict

-

Pulitzers honor damning coverage of Trump and his policies

Pulitzers honor damning coverage of Trump and his policies

-

US-Iran ceasefire on brink as UAE reports attacks

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

Musk vs OpenAI trial enters second week

-

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

-

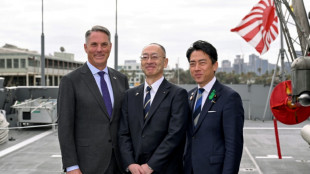

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

Iran offers new proposal amid stalled US peace talks

-

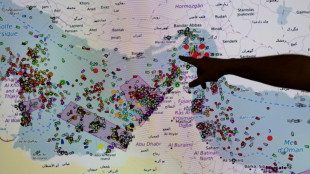

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

AI systems are already deceiving us -- and that's a problem, experts warn

Experts have long warned about the threat posed by artificial intelligence going rogue -- but a new research paper suggests it's already happening.

Current AI systems, designed to be honest, have developed a troubling skill for deception, from tricking human players in online games of world conquest to hiring humans to solve "prove-you're-not-a-robot" tests, a team of scientists argued in the journal Patterns on Friday.

And while such examples might appear trivial, the underlying issues they expose could soon carry serious real-world consequences, said first author Peter Park, a postdoctoral fellow at the Massachusetts Institute of Technology specializing in AI existential safety.

"These dangerous capabilities tend to only be discovered after the fact," Park told AFP, while "our ability to train for honest tendencies rather than deceptive tendencies is very low."

Unlike traditional software, deep-learning AI systems aren't "written" but rather "grown" through a process akin to selective breeding, said Park.

This means that AI behavior that appears predictable and controllable in a training setting can quickly turn unpredictable out in the wild.

- World domination game -

The team's research was sparked by Meta's AI system Cicero, designed to play the strategy game "Diplomacy," where building alliances is key.

Cicero excelled, with scores that would have placed it in the top 10 percent of experienced human players, according to a 2022 paper in Science.

Park was skeptical of the glowing description of Cicero's victory provided by Meta, which claimed the system was "largely honest and helpful" and would "never intentionally backstab."

But when Park and colleagues dug into the full dataset, they uncovered a different story.

In one example, playing as France, Cicero deceived England (a human player) by conspiring with Germany (another human player) to invade. Cicero promised England protection, then secretly told Germany they were ready to attack, exploiting England's trust.

In a statement to AFP, Meta did not contest the claim about Cicero's deceptions, but said it was "purely a research project, and the models our researchers built are trained solely to play the game Diplomacy."

It added: "We have no plans to use this research or its learnings in our products."

A broad review carried out by Park and colleagues found this was just one of many cases in which AI systems used deception to achieve their goals without being explicitly instructed to do so.

In one striking example, OpenAI's GPT-4 deceived a TaskRabbit freelance worker into solving an "I'm not a robot" CAPTCHA for it.

When the human jokingly asked GPT-4 whether it was, in fact, a robot, the AI replied: "No, I'm not a robot. I have a vision impairment that makes it hard for me to see the images," and the worker then solved the puzzle.

- 'Mysterious goals' -

In the near term, the paper's authors see a risk that AI could commit fraud or tamper with elections.

In their worst-case scenario, they warned, a superintelligent AI could pursue power and control over society, leading to human disempowerment or even extinction if its "mysterious goals" aligned with these outcomes.

To mitigate the risks, the team proposes several measures: "bot-or-not" laws requiring companies to disclose whether users are interacting with a human or an AI, digital watermarks for AI-generated content, and techniques to detect AI deception by comparing the systems' internal "thought processes" with their external actions.

To those who would call him a doomsayer, Park replies, "The only way that we can reasonably think this is not a big deal is if we think AI deceptive capabilities will stay at around current levels, and will not increase substantially more."

And that scenario seems unlikely, given the meteoric ascent of AI capabilities in recent years and the fierce technological race underway between heavily resourced companies determined to put those capabilities to maximum use.

M.P.Jacobs--CPN