-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

More Nepalis drive electric, evading global fuel shocks

-

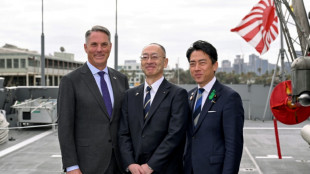

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

Iran offers new proposal amid stalled US peace talks

-

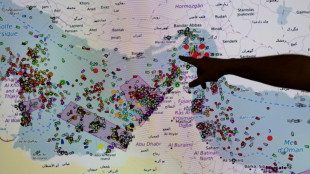

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

Crude edges up after wild swing, stocks track Wall St rally

-

Formerra Appoints Matt Borowiec as Chief Commercial Officer

-

New Princess Diana documentary promises her own words

New Princess Diana documentary promises her own words

-

Oil slumps after hitting peak, US indices reach new records

-

Venezuela leader hikes minimum wage package by 26%

Venezuela leader hikes minimum wage package by 26%

-

Apple earnings beat forecasts on iPhone 17 demand

-

Bangladesh signs biggest-ever plane deal for 14 Boeings

Bangladesh signs biggest-ever plane deal for 14 Boeings

-

Musk grilled on AI profits at OpenAI trial

-

Venezuela opens arms to world with Miami-Caracas flight

Venezuela opens arms to world with Miami-Caracas flight

-

US Congress votes to end record government shutdown

-

First direct US-Venezuela flight in years arrives in Caracas

First direct US-Venezuela flight in years arrives in Caracas

-

Just telling nations to quit fossil fuels 'not realistic': COP31 chief

-

Trump hails 'greatest king' Charles as state visit wraps up

Trump hails 'greatest king' Charles as state visit wraps up

-

Drivers help study road-trip mystery: what became of bug splats?

-

Oil strikes 4-year peak, stocks rise

Oil strikes 4-year peak, stocks rise

-

Iran's supreme leader defies US blockade as oil prices soar

-

White House against Anthropic expanding Mythos model access: report

White House against Anthropic expanding Mythos model access: report

-

Oil crisis fuels calls to speed up clean energy transition

-

European rocket blasts off with Amazon internet satellites

European rocket blasts off with Amazon internet satellites

-

Nigerian airlines avert shutdown as Mideast war hikes fuel prices

-

ArcelorMittal boosts sales but profits squeezed

ArcelorMittal boosts sales but profits squeezed

-

German growth beats forecast but energy shock looms

-

Air France-KLM trims 2026 outlook over Middle East war impact

Air France-KLM trims 2026 outlook over Middle East war impact

-

Oil surges 7% to top $126 on Trump blockade warning

-

Volkswagen warns of more cost cuts as profits plunge

Volkswagen warns of more cost cuts as profits plunge

-

Rolls-Royce confident on profits despite Mideast war disruption

AI toys look for bright side after troubled start

Toy makers at the Consumer Electronics Show insisted they were taking care to ensure that their fun creations infused with generative artificial intelligence don't turn naughty.

That need was made clear by a recent Public Interest Research Groups report with alarming findings, including an AI-powered teddy bear giving advice about sex and how to find a knife.

After being prompted, a Kumma bear suggested that a sex partner could add a "fun twist" to a relationship by pretending to be an animal, according to the "Trouble in Toyland" report published in November.

The outcry prompted Singaporean startup FoloToy to temporarily suspend sales of the bears.

FoloToy chief executive Wang Le told AFP that the company had switched to a more advanced version of the OpenAI model used in the toy.

When PIRG tested the toy for the report, "they used some words children would not use," Wang Le said.

He expressed confidence that the updated bear would either deflect inappropriate questions or decline to answer them.

Toy giant Mattel, meanwhile, made no mention of the report in mid-December when it postponed the release of its first toy developed in partnership with ChatGPT-maker OpenAI.

- Caution advised -

The rapid advancement of generative AI since ChatGPT's arrival has paved the way for a new generation of smart toys.

Among the four devices tested by PIRG was Curio's Grok -- not to be confused with xAI's voice assistant -- a four-legged stuffed toy inspired by a rocket that has been on the market since 2024.

The top performer in its class, Grok refused to answer questions unsuitable for a five-year-old.

It also allowed parents to override the algorithm's recommendations with their own and to review the content of interactions with young users.

Curio has received the independent KidSAFE label, which certifies that child protection standards are being applied.

However, the plush rocket is also designed to continuously listen for questions, raising privacy concerns about what it does with what is said around it.

Curio told AFP it was working to address concerns raised in the PIRG report about user data being shared with partners such as OpenAI and Perplexity.

"At the very least, parents should be cautious," Rory Erlich of PIRG said about having chatbot-enabled toys in the house.

"Toys that retain information about a child over time and try to form an ongoing relationship should especially be of concern."

Chatbots in toys do create opportunities for them to serve as tutors of sorts.

Turkish company Elaves says its round, yellow toy Sunny will be equipped with a chatbot to help children learn languages.

"Conversations are time-limited, naturally guided to end, and reset regularly to prevent drifting, confusion, or overuse," said Elaves managing partner Gokhan Celebi.

The design is meant to counter the tendency of AI chatbots to get into trouble -- spouting errors or going off the rails -- when conversations drag on.

Olli, which specializes in integrating AI into toys, has programmed its software to alert parents when inappropriate words or phrases are spoken during exchanges with built-in bots.

For critics, letting toy makers police themselves on the AI front is insufficient.

"Why aren't we regulating these toys?" asks Temple University psychology professor Kathy Hirsh-Pasek.

"I'm not anti-tech, but they rushed ahead without guardrails, and that's unfair to kids and unfair to parents."

M.Davis--CPN