-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

-

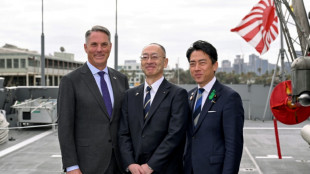

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

Iran offers new proposal amid stalled US peace talks

-

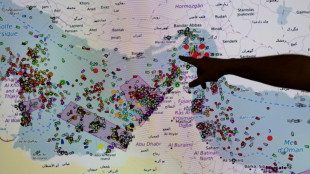

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

Crude edges up after wild swing, stocks track Wall St rally

-

Formerra Appoints Matt Borowiec as Chief Commercial Officer

-

New Princess Diana documentary promises her own words

New Princess Diana documentary promises her own words

-

Oil slumps after hitting peak, US indices reach new records

-

Venezuela leader hikes minimum wage package by 26%

Venezuela leader hikes minimum wage package by 26%

-

Apple earnings beat forecasts on iPhone 17 demand

-

Bangladesh signs biggest-ever plane deal for 14 Boeings

Bangladesh signs biggest-ever plane deal for 14 Boeings

-

Musk grilled on AI profits at OpenAI trial

-

Venezuela opens arms to world with Miami-Caracas flight

Venezuela opens arms to world with Miami-Caracas flight

-

US Congress votes to end record government shutdown

-

First direct US-Venezuela flight in years arrives in Caracas

First direct US-Venezuela flight in years arrives in Caracas

-

Just telling nations to quit fossil fuels 'not realistic': COP31 chief

-

Trump hails 'greatest king' Charles as state visit wraps up

Trump hails 'greatest king' Charles as state visit wraps up

-

Drivers help study road-trip mystery: what became of bug splats?

-

Oil strikes 4-year peak, stocks rise

Oil strikes 4-year peak, stocks rise

-

Iran's supreme leader defies US blockade as oil prices soar

-

White House against Anthropic expanding Mythos model access: report

White House against Anthropic expanding Mythos model access: report

-

Oil crisis fuels calls to speed up clean energy transition

-

European rocket blasts off with Amazon internet satellites

European rocket blasts off with Amazon internet satellites

-

Nigerian airlines avert shutdown as Mideast war hikes fuel prices

-

ArcelorMittal boosts sales but profits squeezed

ArcelorMittal boosts sales but profits squeezed

-

German growth beats forecast but energy shock looms

-

Air France-KLM trims 2026 outlook over Middle East war impact

Air France-KLM trims 2026 outlook over Middle East war impact

-

Oil surges 7% to top $126 on Trump blockade warning

California enacts AI safety law targeting tech giants

California Governor Gavin Newsom has signed into law groundbreaking legislation requiring the world's largest artificial intelligence companies to publicly disclose their safety protocols and report critical incidents, state lawmakers announced Monday.

Senate Bill 53 marks California's most significant move yet to regulate Silicon Valley's rapidly advancing AI industry, while seeking to preserve the state's position as a global tech hub.

"With a technology as transformative as AI, we have a responsibility to support that innovation while putting in place commonsense guardrails," State Senator Scott Wiener, the bill's sponsor, said in a statement.

The new law is Wiener's successful second attempt to establish AI safety regulations, after Newsom vetoed his previous bill, SB 1047, following furious pushback from the tech industry.

It also follows a failed attempt by the Trump administration to prevent states from enacting AI regulations, on the grounds that such rules would create regulatory chaos and slow American innovation in a race with China.

The law requires major AI companies to publicly disclose their safety and security protocols, in redacted form to protect intellectual property.

They must also report critical safety incidents (including model-enabled weapons threats, major cyberattacks, or loss of model control) to state officials within 15 days.

The legislation also establishes whistleblower protections for employees who reveal evidence of dangers or violations.

According to Wiener, California's approach differs from the European Union's landmark AI Act, which requires private disclosures to government agencies.

SB 53, meanwhile, mandates public disclosure to ensure greater accountability.

In what advocates describe as a world-first provision, the law requires companies to report instances where AI systems engage in dangerous deceptive behavior during testing.

For example, if an AI system lies about the effectiveness of controls designed to prevent it from assisting in bioweapon construction, developers must disclose the incident when it materially increases the risk of catastrophic harm.

The working group behind the law was led by prominent experts including Stanford University's Fei-Fei Li, known as the "godmother of AI."

S.F.Lacroix--CPN