-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

-

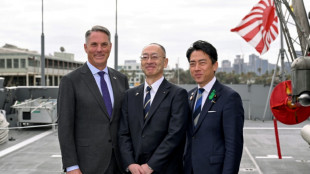

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

More Nepalis drive electric, evading global fuel shocks

-

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

-

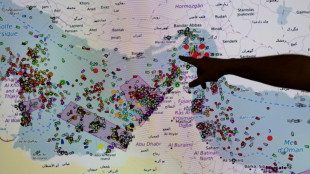

French hub monitors Hormuz tensions from afar

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

-

Formerra Appoints Matt Borowiec as Chief Commercial Officer

Formerra Appoints Matt Borowiec as Chief Commercial Officer

-

New Princess Diana documentary promises her own words

-

Oil slumps after hitting peak, US indices reach new records

Oil slumps after hitting peak, US indices reach new records

-

Venezuela leader hikes minimum wage package by 26%

-

Apple earnings beat forecasts on iPhone 17 demand

Apple earnings beat forecasts on iPhone 17 demand

-

Bangladesh signs biggest-ever plane deal for 14 Boeings

OpenAI releases reasoning AI with eye on safety, accuracy

ChatGPT creator OpenAI on Thursday released a new series of artificial intelligence models designed to spend more time thinking -- in the hope that generative AI chatbots will provide more accurate and beneficial responses.

The new models, known as OpenAI o1-Preview, are designed to tackle complex tasks and solve more challenging problems in science, coding and mathematics -- something that earlier models have been criticized for failing to do consistently.

Unlike their predecessors, these models have been trained to refine their thinking processes, try different methods and recognize mistakes before they deliver a final answer.

The new release comes as OpenAI is raising funds that could see it valued around $150 billion, which would make it one of the world's most valuable private companies, according to US media.

Investors include Microsoft and Nvidia, and could also include a $7 billion investment from MGX, a United Arab Emirates-backed investment fund, The Information reported.

OpenAI CEO Sam Altman hailed the models as "a new paradigm: AI that can do general-purpose complex reasoning."

However, he cautioned that the technology "is still flawed, still limited, and it still seems more impressive on first use than it does after you spend more time with it."

OpenAI's push to improve "thinking" in its models is a response to the persistent problem of "hallucinations" in AI chatbots.

The term refers to chatbots' tendency to generate persuasive but incorrect content, a problem that has somewhat cooled the excitement over ChatGPT-style AI features among business customers.

"We have noticed that this model hallucinates less," OpenAI researcher Jerry Tworek told The Verge.

But "we can't say we solved hallucinations," he added.

The Microsoft-backed company said that in tests, the models performed comparably to PhD students on difficult tasks in physics, chemistry and biology.

They also excelled in mathematics and coding, achieving an 83 percent success rate on a qualifying exam for the International Mathematical Olympiad, compared to 13 percent for GPT-4o, OpenAI's most advanced general-use model.

OpenAI said that the new reasoning capabilities could be used by healthcare researchers to annotate cell sequencing data, by physicists to generate complex formulas, or by computer developers to build and execute multistep designs.

The company also said that the models survived rigorous jailbreaking tests and could better withstand attempts to circumvent their guardrails.

OpenAI said its strengthened safety measures also included recent agreements with the US and UK AI Safety Institutes, which were granted early access to the models for evaluation and testing.

X.Cheung--CPN