-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Pulitzers honor damning coverage of Trump and his policies

-

US-Iran ceasefire on brink as UAE reports attacks

US-Iran ceasefire on brink as UAE reports attacks

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

-

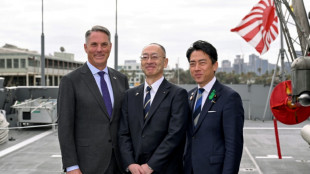

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

More Nepalis drive electric, evading global fuel shocks

-

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

Aviation companies step up as Spirit winds down

-

'Bookless bookstore': audio-only book shop opens in New York

-

Venezuelan protesters call government wage hike a joke

Venezuelan protesters call government wage hike a joke

-

S&P 500, Nasdaq end at fresh records on tech earnings strength

-

Pope names former undocumented migrant as US bishop of West Virginia

Pope names former undocumented migrant as US bishop of West Virginia

-

Trump says will raise US tariffs on EU cars to 25%

-

ExxonMobil CEO sees chance of higher oil prices as earnings dip

ExxonMobil CEO sees chance of higher oil prices as earnings dip

-

After Madonna and Lady Gaga, Shakira set for Rio beach mega-gig

-

King Charles gets warm welcome in Bermuda after whirlwind US visit

King Charles gets warm welcome in Bermuda after whirlwind US visit

-

Coe hails IOC gender testing decision

-

Baguettes take centre stage on France's Labour Day

Baguettes take centre stage on France's Labour Day

-

Iran offers new proposal amid stalled US peace talks

-

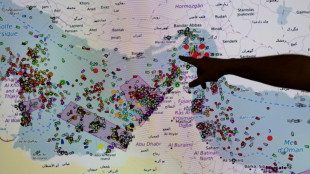

French hub monitors Hormuz tensions from afar

French hub monitors Hormuz tensions from afar

-

Oil steady after wild swing, stocks diverge in thin trading

-

Chinese swimmer Sun Yang reports cyberbullying to police

Chinese swimmer Sun Yang reports cyberbullying to police

-

Iran activates air defences as Trump faces congressional deadline

-

India's cows offer biogas alternative to Mideast energy crunch

India's cows offer biogas alternative to Mideast energy crunch

-

Crude edges up after wild swing, stocks track Wall St rally

-

Formerra Appoints Matt Borowiec as Chief Commercial Officer

Formerra Appoints Matt Borowiec as Chief Commercial Officer

-

New Princess Diana documentary promises her own words

-

Oil slumps after hitting peak, US indices reach new records

Oil slumps after hitting peak, US indices reach new records

-

Venezuela leader hikes minimum wage package by 26%

Inbred, gibberish or just MAD? Warnings rise about AI models

When academic Jathan Sadowski reached for an analogy last year to describe how AI programs decay, he landed on the term "Habsburg AI".

The Habsburgs were one of Europe's most powerful royal houses, but entire sections of their family line collapsed after centuries of inbreeding.

Recent studies have shown how AI programs underpinning products like ChatGPT go through a similar collapse when they are repeatedly fed their own data.

"I think the term Habsburg AI has aged very well," Sadowski told AFP, saying his coinage had "only become more relevant for how we think about AI systems".

The ultimate concern is that AI-generated content could take over the web, which could in turn render chatbots and image generators useless and throw a trillion-dollar industry into a tailspin.

But other experts argue that the problem is overstated, or can be fixed.

And many companies are enthusiastic about using what they call synthetic data to train AI programs. This artificially generated data is used to augment or replace real-world data. It is cheaper than human-created content and more predictable.

"The open question for researchers and companies building AI systems is: how much synthetic data is too much," said Sadowski, lecturer in emerging technologies at Australia's Monash University.

- 'Mad cow disease' -

Training AI programs, known in the industry as large language models (LLMs), involves scraping vast quantities of text or images from the internet.

This information is broken into trillions of tiny machine-readable chunks, known as tokens.

When asked a question, a program like ChatGPT selects and assembles tokens in a way that its training data tells it is the most likely sequence to fit with the query.

But even the best AI tools generate falsehoods and nonsense, and critics have long expressed concern about what would happen if a model was fed on its own outputs.

In late July, a paper in the journal Nature titled "AI models collapse when trained on recursively generated data" proved a lightning rod for discussion.

The authors described how models quickly discarded rarer elements in their original dataset and, as Nature reported, outputs degenerated into "gibberish".
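The loss of rarer elements can be illustrated with a toy simulation. The sketch below is not the method used in the Nature paper: it assumes a "model" that is nothing more than a table of token frequencies, refitted each generation to a finite sample drawn from the previous table. The function name `resample_generations`, the vocabulary and the Zipf-like starting weights are all hypothetical choices made for illustration.

```python
import random
from collections import Counter


def resample_generations(weights, sample_size, generations, seed=0):
    """Refit a frequency-table 'model' to samples drawn from itself.

    weights: dict mapping token -> starting probability (illustrative values).
    Returns a list of probability tables, one per generation, starting
    with the original table.
    """
    rng = random.Random(seed)
    probs = {t: w for t, w in weights.items() if w > 0}
    history = [probs]
    for _ in range(generations):
        tokens = list(probs)
        # Generate synthetic "data" from the current model.
        drawn = rng.choices(tokens, weights=[probs[t] for t in tokens], k=sample_size)
        # Train the next model on that synthetic data alone.
        counts = Counter(drawn)
        probs = {t: c / sample_size for t, c in counts.items()}
        history.append(probs)
    return history


# Hypothetical vocabulary of 50 tokens with Zipf-like frequencies.
raw = {f"tok{rank}": 1.0 / rank for rank in range(1, 51)}
total = sum(raw.values())
start = {t: w / total for t, w in raw.items()}

history = resample_generations(start, sample_size=200, generations=30)
for generation in (0, 10, 20, 30):
    print(generation, "tokens still in use:", len(history[generation]))
```

In this toy setup, a token that fails to appear in one generation's sample has zero probability from then on, so the vocabulary can only shrink, and the rarest tokens are the most likely to go first. Real language models are far more complex, and the exact counts printed depend on the random seed and sample size.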

A week later, researchers from Rice and Stanford universities published a paper titled "Self-consuming generative models go MAD" that reached a similar conclusion.

They tested image-generating AI programs and showed that outputs became more generic and strafed with undesirable elements as they added AI-generated data to the underlying model.

They labelled model collapse "Model Autophagy Disorder" (MAD) and compared it to mad cow disease, a fatal illness caused by feeding the remnants of dead cows to other cows.

- 'Doomsday scenario' -

These researchers worry that AI-generated text, images and video are clearing the web of usable human-made data.

"One doomsday scenario is that if left uncontrolled for many generations, MAD could poison the data quality and diversity of the entire internet," one of the Rice University authors, Richard Baraniuk, said in a statement.

However, industry figures are unfazed.

Anthropic and Hugging Face, two leaders in the field who pride themselves on taking an ethical approach to the technology, both told AFP they used AI-generated data to fine-tune or filter their datasets.

Anton Lozhkov, machine learning engineer at Hugging Face, said the Nature paper gave an interesting theoretical perspective but its disaster scenario was not realistic.

"Training on multiple rounds of synthetic data is simply not done in reality," he said.

However, he said researchers were just as frustrated as everyone else with the state of the internet.

"A large part of the internet is trash," he said, adding that Hugging Face already made huge efforts to clean data -- sometimes jettisoning as much as 90 percent.

He hoped that web users would help clear up the internet by simply not engaging with generated content.

"I strongly believe that humans will see the effects and catch generated data way before models will," he said.

M.Anderson--CPN