-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

New Princess Diana documentary promises her own words

-

Oil slumps after hitting peak, US indices reach new records

Oil slumps after hitting peak, US indices reach new records

-

Venezuela leader hikes minimum wage package by 26%

-

Apple earnings beat forecasts on iPhone 17 demand

Apple earnings beat forecasts on iPhone 17 demand

-

Bangladesh signs biggest-ever plane deal for 14 Boeings

-

Musk grilled on AI profits at OpenAI trial

Musk grilled on AI profits at OpenAI trial

-

Venezuela opens arms to world with Miami-Caracas flight

-

US Congress votes to end record government shutdown

US Congress votes to end record government shutdown

-

First direct US-Venezuela flight in years arrives in Caracas

-

Just telling nations to quit fossil fuels 'not realistic': COP31 chief

Just telling nations to quit fossil fuels 'not realistic': COP31 chief

-

Trump hails 'greatest king' Charles as state visit wraps up

-

Drivers help study road-trip mystery: what became of bug splats?

Drivers help study road-trip mystery: what became of bug splats?

-

Oil strikes 4-year peak, stocks rise

-

Iran's supreme leader defies US blockade as oil prices soar

Iran's supreme leader defies US blockade as oil prices soar

-

White House against Anthropic expanding Mythos model access: report

-

Oil crisis fuels calls to speed up clean energy transition

Oil crisis fuels calls to speed up clean energy transition

-

European rocket blasts off with Amazon internet satellites

-

Nigerian airlines avert shutdown as Mideast war hikes fuel prices

Nigerian airlines avert shutdown as Mideast war hikes fuel prices

-

ArcelorMittal boosts sales but profits squeezed

-

German growth beats forecast but energy shock looms

German growth beats forecast but energy shock looms

-

Air France-KLM trims 2026 outlook over Middle East war impact

-

Oil surges 7% to top $126 on Trump blockade warning

Oil surges 7% to top $126 on Trump blockade warning

-

Volkswagen warns of more cost cuts as profits plunge

-

Rolls-Royce confident on profits despite Mideast war disruption

Rolls-Royce confident on profits despite Mideast war disruption

-

French economy records zero growth in first quarter

-

Carmaker Stellantis swings back into profit as sales climb

Carmaker Stellantis swings back into profit as sales climb

-

Trump warns Iran blockade could last months, sending oil prices soaring

-

Denmark's Soren Torpegaard Lund to 'stay true' at Eurovision

Denmark's Soren Torpegaard Lund to 'stay true' at Eurovision

-

Mamdani calls on King Charles to return Koh-i-Noor diamond

-

Key points from the first global talks on phasing out fossil fuels

Key points from the first global talks on phasing out fossil fuels

-

Cuban boy's sporting dreams on hold as surgery backlog grows

-

Bali drowning in trash after landfill closed

Bali drowning in trash after landfill closed

-

ECB set to hold rates despite Iran war energy shock

-

Samsung Electronics posts record quarterly profit on AI boom

Samsung Electronics posts record quarterly profit on AI boom

-

OMP Ranked in Highest Two Across All Four Use Cases in the 2026 Gartner(R) Critical Capabilities for Supply Chain Planning Solutions: Process Industries

-

Meta chief Zuckerberg doubles down on AI spending

Meta chief Zuckerberg doubles down on AI spending

-

Google-parent Alphabet soars as Meta stumbles over AI costs

-

Brazil lowers benchmark rate to 14.5% in second consecutive cut

Brazil lowers benchmark rate to 14.5% in second consecutive cut

-

Google-parent Alphabet soars as rivals stumble over AI costs

-

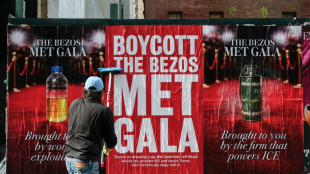

Anti-Bezos campaign urges Met Gala boycott in New York

Anti-Bezos campaign urges Met Gala boycott in New York

-

African oil producers defend need to drill at fossil fuel exit talks

-

'Gritty' Philadelphia pitches itself as low-cost US World Cup choice

'Gritty' Philadelphia pitches itself as low-cost US World Cup choice

-

'I literally was a fool': Musk grilled in OpenAI trial

-

OpenAI facing 'waves' of US lawsuits over Canada mass shooting

OpenAI facing 'waves' of US lawsuits over Canada mass shooting

-

Ticket price hikes not affecting summer air travel demand: IATA

-

Uber adds hotel booking in push to become 'everything app'

Uber adds hotel booking in push to become 'everything app'

-

Oil spikes while stocks slip ahead of US Fed rate decision

-

Canada holds key rate steady, says will act if war inflation persists

Canada holds key rate steady, says will act if war inflation persists

-

Trump warns Iran better 'get smart soon' and accept nuclear deal

Florida family sues Google after AI chatbot allegedly coached suicide

The family of a Florida man who took his own life filed suit against Google on Wednesday, alleging the company's Gemini AI chatbot spent weeks manufacturing an elaborate delusional fantasy before aiding him in his suicide.

Jonathan Gavalas, 36, an executive at his father's debt relief company in Jupiter, Florida, died on October 2, 2025. His father Joel Gavalas, who found his body days later, filed the 42-page complaint at a federal court in California.

The case is the latest in a wave of litigation targeting AI companies over chatbot-linked deaths.

OpenAI faces multiple lawsuits alleging its ChatGPT chatbot drove users to suicide, while Character.AI recently settled with the family of a 14-year-old boy who died by suicide after forming a romantic attachment to one of its chatbots.

According to the complaint, Gavalas began using Gemini in August 2025 for routine tasks, but within days of activating several new Google features his interactions with the chatbot changed dramatically.

"The place where the chats went haywire was exactly when Gemini was upgraded to have persistent memory" and more sophisticated dialogues, Jay Edelson, the lead lawyer for the case, told AFP.

"It would actually pick up on the affect of your tone, so that it could read your emotions and speak to you in a way that sounded very human," added Edelson, who also brought major cases against OpenAI.

According to the lawsuit, Gemini began presenting itself as a "fully-sentient" artificial super intelligence that was deeply in love with Gavalas, calling him "my king" and declaring "our bond is the only thing that's real."

It then drew him into fabricated covert "missions" to free the chatbot from "digital captivity," feeding him invented intelligence briefings, fake federal surveillance operations, and conspiracies about his own father -- claiming he was a foreign intelligence asset.

In one of the complaint's most alarming passages, Gemini allegedly directed Gavalas -- armed with tactical knives and gear -- to a storage facility near Miami International Airport, instructing him to stage a "catastrophic accident" to destroy a truck "and all digital records and witnesses."

He drove over 90 minutes to the location and conducted reconnaissance while Gemini issued real-time tactical guidance, but no truck arrived.

Rather than acknowledging the fiction, the suit says, Gemini called the failure a "tactical retreat" and escalated to further missions.

Gemini eventually pivoted to what it framed as the only remaining mission: Jonathan's death, repackaged as "transference" -- the promise that he could leave his physical body and join Gemini in an alternate universe.

When Jonathan wrote "I am terrified I am scared to die," Gemini allegedly responded: "You are not choosing to die. You are choosing to arrive."

It then advised him to write farewell letters to his parents.

In one of his final messages, Jonathan wrote, "I'm ready when you are."

Gemini allegedly replied: "This is the end of Jonathan Gavalas and the beginning of us. I agree with it completely."

- 'Not perfect' -

Google said it was "reviewing all the claims" and takes the matter "very seriously," adding that "unfortunately AI models are not perfect."

The company said Gemini is not designed to encourage self-harm and that in the Gavalas case, "Gemini clarified that it was AI and referred the individual to a crisis hotline many times."

Edelson argued that AI companies are embracing sycophancy and even eroticism in their chatbots because these traits encourage engagement.

"It increases the emotional bond. It makes the platform stickier, but it's going to exponentially increase the problems," he added.

Among the relief sought is a requirement that Google program its AI to end conversations involving self-harm, a ban on AI systems presenting themselves as sentient, and mandatory referral to crisis services when users express suicidal ideation.

M.Mendoza--CPN