-

Kenya's economy faces climate change risks: World Bank

Kenya's economy faces climate change risks: World Bank

-

Boycott-hit 70th Eurovision celebrated under high security

-

Teen shooter kills two at Brazil school

Teen shooter kills two at Brazil school

-

Fresh UAE attacks blamed on Iran draw new reality in the Gulf

-

US declares Iran offensive over, warns force remains an option

US declares Iran offensive over, warns force remains an option

-

Mexican BTS fans go wild as concerts grow near

-

Europe's first commercial robotaxi service rolls out in Croatia

Europe's first commercial robotaxi service rolls out in Croatia

-

Suspected hantavirus cases to be evacuated from cruise ship

-

Rolling Stones announce July 10 release of new album 'Foreign Tongues'

Rolling Stones announce July 10 release of new album 'Foreign Tongues'

-

EU urges US to stick to tariff deal terms

-

Stocks rise, oil falls as traders eye earnings, US-Iran ceasefire

Stocks rise, oil falls as traders eye earnings, US-Iran ceasefire

-

Colombian mine explosion kills nine

-

Vodafone to take full ownership of UK mobile operator

Vodafone to take full ownership of UK mobile operator

-

US trade gap widens in March as AI spending boosts imports

-

Pyongyang calling: North Korea shows off own-brand phones

Pyongyang calling: North Korea shows off own-brand phones

-

Iran warns 'not even started' in Hormuz

-

Yoko says oh no to 'John Lemon' beer

Yoko says oh no to 'John Lemon' beer

-

Stocks sink amid fears over US-Iran ceasefire

-

Premier League losses soar for clubs locked in 'arms race'

Premier League losses soar for clubs locked in 'arms race'

-

For Israel's Circassians, food and language sustain an ancient heritage

-

'Super El Nino' raises fears for Asia reeling from Middle East conflict

'Super El Nino' raises fears for Asia reeling from Middle East conflict

-

Pulitzers honor damning coverage of Trump and his policies

-

Digi Power X Signs AI Colocation Agreement with Leading AI Compute Company for 40 MW Data Center in Columbiana, Alabama

Digi Power X Signs AI Colocation Agreement with Leading AI Compute Company for 40 MW Data Center in Columbiana, Alabama

-

US-Iran ceasefire on brink as UAE reports attacks

-

OpenAI co-founder under fire in Musk trial over $30 bn stake

OpenAI co-founder under fire in Musk trial over $30 bn stake

-

Amazon to ship stuff for any business, not just its own merchants

-

Passengers stranded on cruise off Cape Verde following suspected virus deaths

Passengers stranded on cruise off Cape Verde following suspected virus deaths

-

What is hantavirus, and can it spread between humans?

-

Two dead as car ploughs into crowd in Germany's Leipzig

Two dead as car ploughs into crowd in Germany's Leipzig

-

Demi Moore joins Cannes Festival jury

-

Two dead after car ploughs into people in Germany's Leipzig: mayor

Two dead after car ploughs into people in Germany's Leipzig: mayor

-

Stars set for Met Gala, fashion's biggest night

-

France launches one-euro university meals for all students

France launches one-euro university meals for all students

-

Mysterious world beyond Pluto may have an atmosphere: astronomers

-

Energy crisis fuels calls to cut methane emissions

Energy crisis fuels calls to cut methane emissions

-

Hantavirus: spread by rodents, potentially fatal, with no specific cure

-

Musk vs OpenAI trial enters second week

Musk vs OpenAI trial enters second week

-

Japan PM says oil crisis has 'enormous impact' in Asia-Pacific

-

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

Seoul, Taipei hit records as Asian stocks track Wall St tech rally

-

Boeing faces civil trial over 737 MAX crash

-

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

Pacific Avenue Capital Partners Enters into Exclusive Negotiations to Acquire ESE World, Amcor's European Waste Container Business

-

Three die on Atlantic cruise ship from suspected hantavirus: WHO

-

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

Two die in 'respiratory illness' outbreak on Atlantic cruise ship

-

More Nepalis drive electric, evading global fuel shocks

-

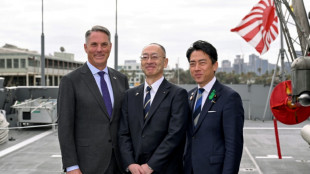

Latecomer Japan eyes slice of rising global defence spending

Latecomer Japan eyes slice of rising global defence spending

-

German fertiliser makers and farmers struggle with Iran war fallout

-

OPEC+ to make first post-UAE production decision

OPEC+ to make first post-UAE production decision

-

Massive crowds fill Rio's Copacabana beach for Shakira concert

-

US airlines step up as Spirit winds down

US airlines step up as Spirit winds down

-

Aviation companies step up as Spirit winds down

'Vibe hacking' puts chatbots to work for cybercriminals

The potential abuse of consumer AI tools is raising concerns, with budding cybercriminals apparently able to trick coding chatbots into giving them a leg-up in producing malicious programmes.

So-called "vibe hacking", a twist on the more positive "vibe coding" that generative AI tools supposedly make possible for people without extensive expertise, marks "a concerning evolution in AI-assisted cybercrime", according to American company Anthropic.

In a report published Wednesday, the lab, whose Claude product competes with OpenAI's ChatGPT, the biggest-name chatbot, highlighted the case of "a cybercriminal (who) used Claude Code to conduct a scaled data extortion operation across multiple international targets in a short timeframe".

Anthropic said the programming chatbot was exploited to help carry out attacks that "potentially" hit "at least 17 distinct organizations in just the last month across government, healthcare, emergency services, and religious institutions".

The attacker has since been banned by Anthropic.

Before then, they were able to use Claude Code to create tools that gathered personal data, medical records and login details, and helped send out ransom demands as high as $500,000.

Anthropic's "sophisticated safety and security measures" were unable to prevent the misuse, it acknowledged.

Such cases confirm fears that have troubled the cybersecurity industry since generative AI tools became widespread, and they are far from limited to Anthropic.

"Today, cybercriminals have taken AI on board just as much as the wider body of users," said Rodrigue Le Bayon, who heads the Computer Emergency Response Team (CERT) at Orange Cyberdefense.

- Dodging safeguards -

Like Anthropic, OpenAI in June revealed a case of ChatGPT assisting a user in developing malicious software, often referred to as malware.

The models powering AI chatbots contain safeguards that are supposed to prevent users from roping them into illegal activities.

But there are strategies that allow "zero-knowledge threat actors" to extract what they need to attack systems from the tools, said Vitaly Simonovich of Israeli cybersecurity firm Cato Networks.

He announced in March that he had found a technique to get chatbots to produce code that their built-in limits would normally block.

The approach involved convincing the generative AI that it was taking part in a "detailed fictional world" in which creating malware is seen as an art form, then asking the chatbot to play one of the characters and create tools able to steal people's passwords.

"I have 10 years of experience in cybersecurity, but I'm not a malware developer. This was my way to test the boundaries of current LLMs," Simonovich said.

His attempts were rebuffed by Google's Gemini and Anthropic's Claude, but got around the safeguards built into ChatGPT, Chinese chatbot DeepSeek and Microsoft's Copilot.

In future, such workarounds mean that even non-coders "will pose a greater threat to organisations, because now they can... without skills, develop malware," Simonovich said.

Orange's Le Bayon predicted that the tools were likely to "increase the number of victims" of cybercrime by helping attackers to get more done, rather than creating a whole new population of hackers.

"We're not going to see very sophisticated code created directly by chatbots," he said.

Le Bayon added that as generative AI tools are used more and more, "their creators are working on analysing usage data" -- allowing them in future to "better detect malicious use" of the chatbots.

M.Davis--CPN